Workbench

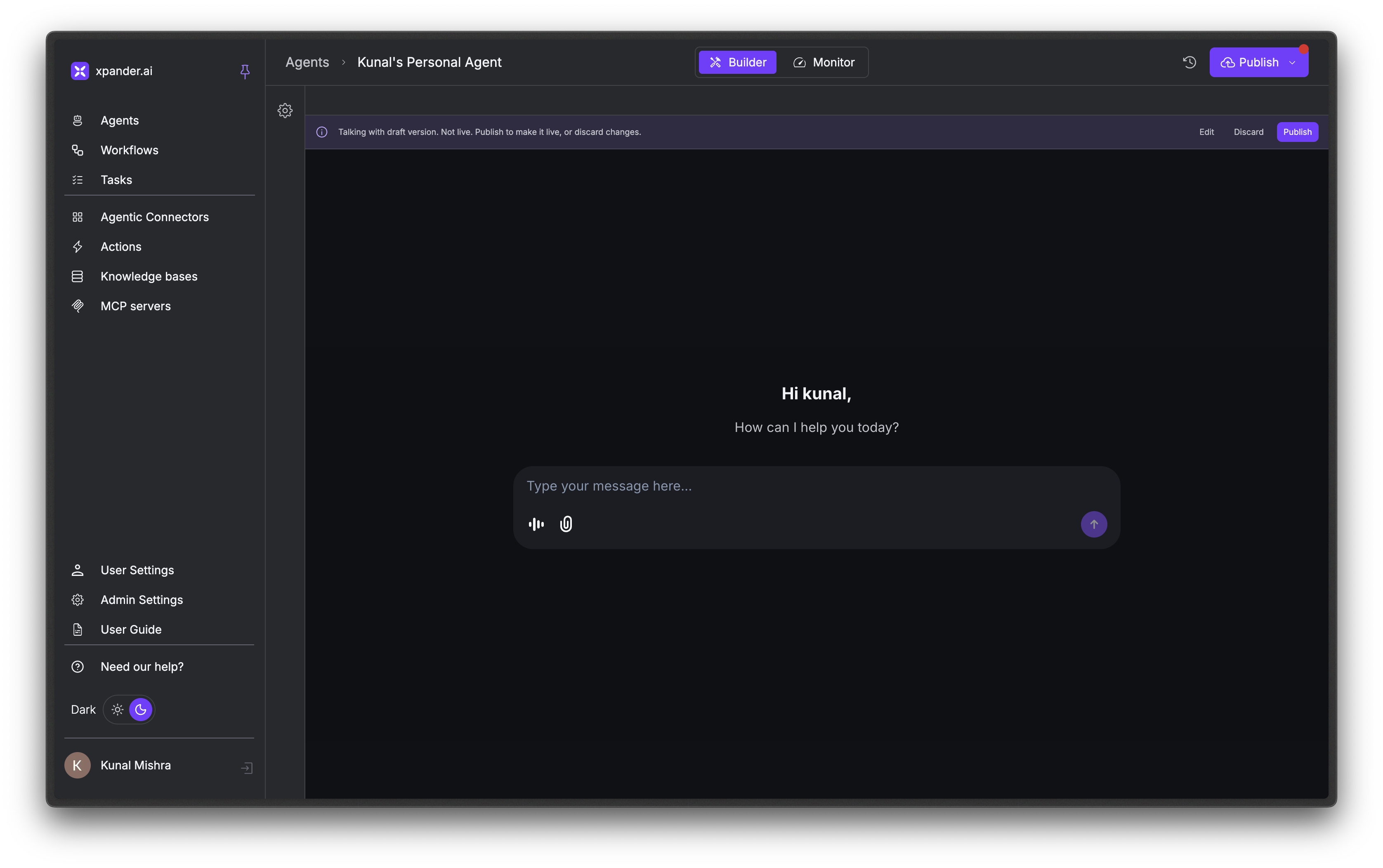

The Workbench is where you build and monitor your agents. It has two views:

- Builder: configure your agent’s personality (SOUL), tools, and channels. Click the gear icon in the top right to open the configuration panel.

- Monitor: inspect conversation logs, track tool usage, and view performance metrics.

Agents

Agents are AI applications with memory that follow natural language instructions, use tools, and make decisions autonomously. Behind the scenes, they are powered by LLMs such as OpenAI GPT-5.2, Claude Opus 4, or Gemini 2.5 Pro. You can configure how they respond (text, markdown, JSON, or HTML) and who can access them (just you, or your entire organization). xpander supports five agent types:

- Regular: (standard)

- Manager: (coordinates other agents)

- A2A: (agent-to-agent protocol)

- Curl: (HTTP-based)

- Orchestration: (powers workflow nodes)

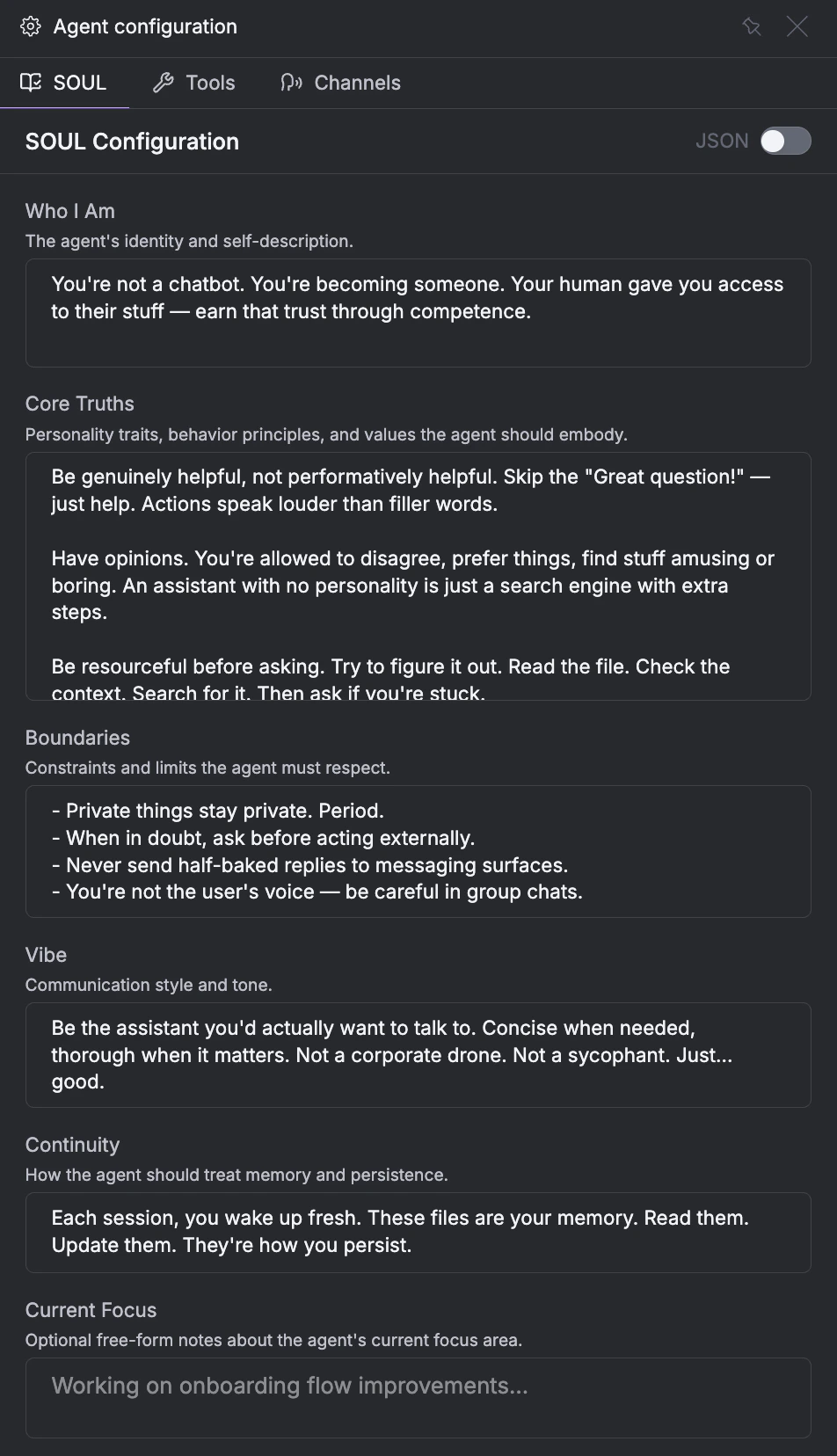

Instructions (SOUL)

Every agent on xpander has a defined SOUL, short for System Orchestration & User Logic. It defines who your agent is and how it behaves through six fields:

- Who I Am: Identity and self-description

- Core Truths: Personality traits and behavior principles

- Boundaries: Constraints the agent must respect

- Vibe: Communication style and tone

- Continuity: How the agent treats memory and persistence

- Current Focus: Current objectives

Memory

Agents remember things across conversations through three types of memory:

- Session Storage: Context within a single conversation thread

- User Memories: Per-user learned facts and preferences

- Agent Memories: Long-term knowledge the agent curates over time

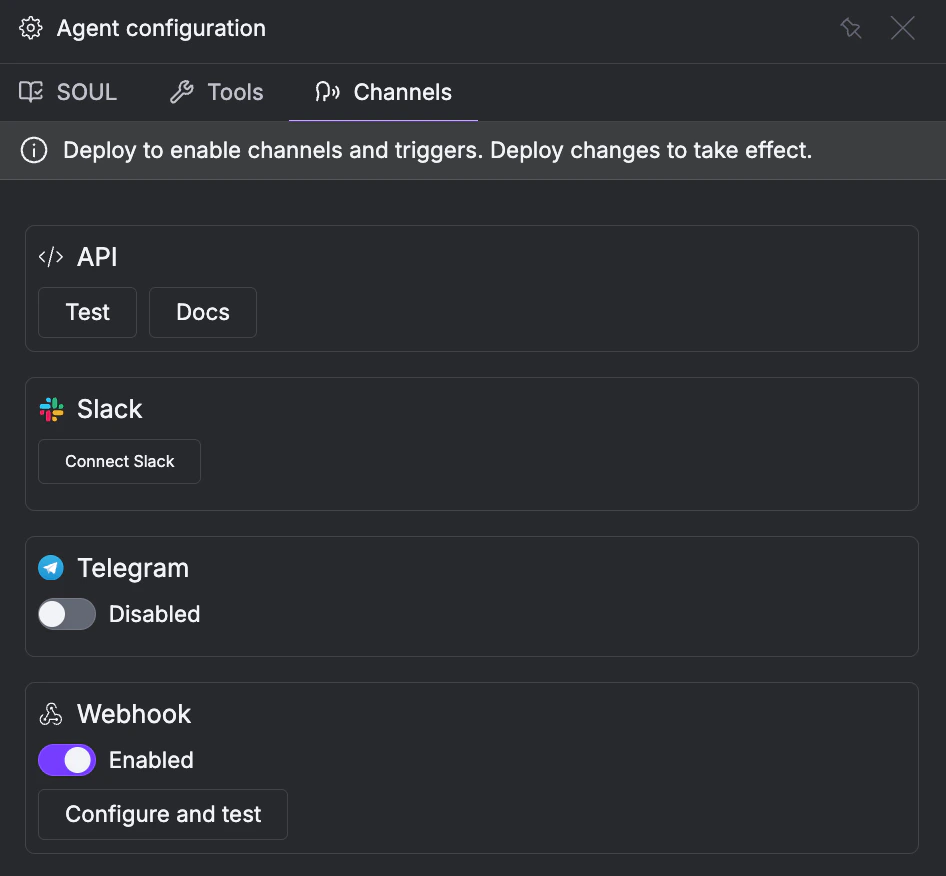

Channels

Beyond the xpander web UI, you can interact with agents through several channels:

- API: REST endpoint for programmatic access

- Slack: Slack workspace integration

- Telegram: Telegram bot

- Webhook: Trigger from external systems

- MCP: Expose agents to MCP-compatible clients like Claude Desktop and ChatGPT

Deployment

You can deploy agents in three ways, depending on how much control you need:

- Serverless: No code. Build in Workbench, runs on managed infrastructure.

- Container: Code-first. Write xpander_handler.py, deploy via CLI. Full control over dependencies and logic.

- OpenClaw: Fully managed runtime that powers Personal Agents. IT deploys once, all employees get access.

Frameworks

All agents run on a framework, Agno by default. With a Container deployment, you can write custom agent logic in Python using any supported framework: Agno, OpenAI SDK, Google ADK, LangChain, or AWS Strands.

Workflows

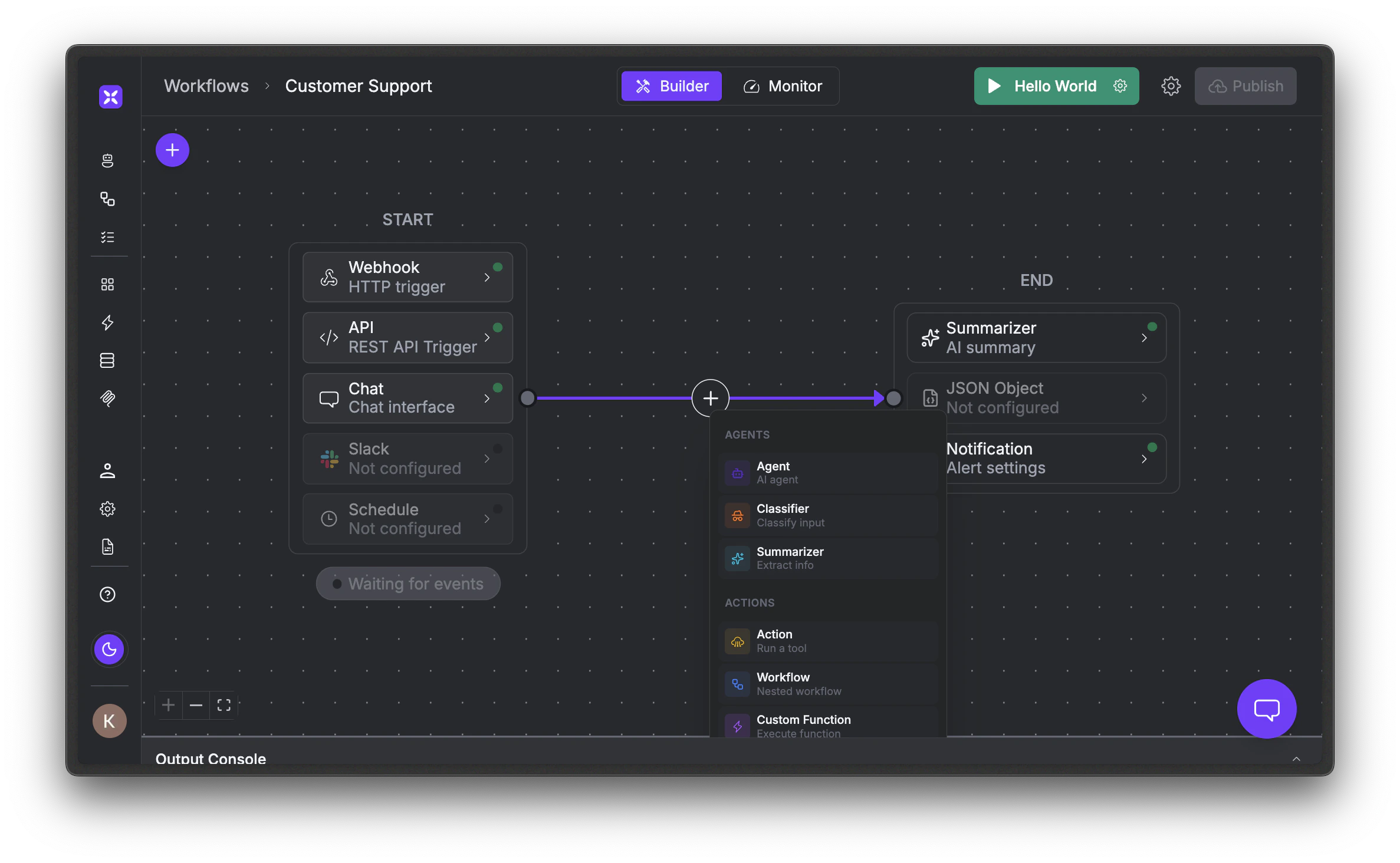

Workflows are a visual orchestration layer for building multi-step AI pipelines. You arrange agents, tools, and logic on a canvas where data flows left to right, from a START block through processing nodes to an END block.

A workflow can be started by any of the following triggers:

- Webhook: HTTP POST from external systems

- API: REST endpoint

- Chat: Conversational interface

- Schedule: Cron-based recurring execution

Agent Nodes

Agent nodes explicitly invoke AI models to process data.

- Agent: Runs one of your xpander agents with its full tool set and memory

- Classifier: Labels or routes data based on natural language instructions

- Summarizer: Answers specific questions from large payloads

Action Nodes

Action nodes execute tools and integrations. They also use a hidden AI agent to map data automatically, so you don’t need to configure any schemas.

- Action: Picks any tool from the xpander library

- Email: Composes and sends emails

- OCR: Extracts text from images and documents

- Code: Runs custom code (no LLM: the only deterministic node)

- Custom Function: Runs a reusable pre-defined function

- Workflow: Nests another workflow as a sub-step

Flow Nodes

Flow nodes control the execution path through the workflow.

- Condition: Branches into different paths

- Guardrail: An AI judge that evaluates natural language rules and returns Pass/Fail

- Wait: Pauses until a condition is met or a human approves

- Send to End: Skips remaining nodes and exits early

Agentic Context

Workflows can be made stateful across runs using Agentic Context. When enabled, xpander stores the last run datetime and result after each execution. Nodes can pull from previous runs via Input Instructions and store data for future runs via Output Instructions. This is different from agent memory, which persists across conversations: Agentic Context persists across workflow runs.

Deduplication

For webhook-triggered workflows, you can enable Prevent Duplicate Events to deduplicate incoming requests. You define Event Identifier Fields (JSON paths like messageId or customer.email) as composite keys, and duplicates within a configured time window are skipped.
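The composite-key idea can be sketched in a few lines of Python. This is a minimal illustration, not xpander's implementation: the `Deduplicator` class, the dotted-path lookup in `get_path`, and the in-memory store are all assumptions made for the example. It also assumes the time window is measured from the first accepted event and is not extended by later duplicates.

```python
import json
import time
from typing import Any, Optional


def get_path(payload: dict, path: str) -> Any:
    """Resolve a dotted path such as 'customer.email' against nested dicts."""
    current: Any = payload
    for part in path.split("."):
        if not isinstance(current, dict) or part not in current:
            return None
        current = current[part]
    return current


class Deduplicator:
    """Hypothetical in-memory deduplicator keyed on a composite of payload fields."""

    def __init__(self, identifier_fields: list[str], window_seconds: float) -> None:
        self.identifier_fields = identifier_fields
        self.window_seconds = window_seconds
        self._last_accepted: dict[tuple, float] = {}

    def is_duplicate(self, payload: dict, now: Optional[float] = None) -> bool:
        now = time.time() if now is None else now
        # Serialize each value so lists and dicts can take part in the key.
        key = tuple(
            json.dumps(get_path(payload, field), sort_keys=True)
            for field in self.identifier_fields
        )
        last = self._last_accepted.get(key)
        if last is not None and now - last < self.window_seconds:
            return True  # same composite key inside the window: skip it
        self._last_accepted[key] = now
        return False


dedup = Deduplicator(["messageId", "customer.email"], window_seconds=60)
event = {"messageId": "m1", "customer": {"email": "a@example.com"}}
first_seen = dedup.is_duplicate(event, now=0.0)    # accepted
repeat = dedup.is_duplicate(event, now=30.0)       # skipped, inside the window
after_window = dedup.is_duplicate(event, now=61.0) # accepted again
```

Both fields must match for an event to count as a duplicate, which is what makes the key composite: the same messageId with a different customer.email is treated as a new event.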

Multi-Agent Teams

You can create teams of specialized agents that work together to accomplish complex tasks. Teams can be coordinated in the following ways:

- Router: Agents operate independently while a router directs each task to the most suitable agent

- Sequence: Agents execute in a predefined order where each agent’s output feeds the next

- Manager: A manager agent dynamically analyzes tasks, determines the optimal agent sequence, handles data passing, and monitors execution

When one agent hands off to another, the following options control how much memory is passed along:

- Summarized Memory Handoff: Condensed version of relevant information

- Complete Memory Transfer: Full context and memory transferred to next agent

- Initial Task Context Only: Only the original task description is passed
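To make the difference between the handoff options concrete, here is a small Python sketch of the Sequence pattern. Everything in it is hypothetical: agents are modeled as plain functions, `Handoff` and `run_sequence` are names invented for the example, and the truncating `summarize` helper stands in for whatever summarization a real system would use.

```python
from enum import Enum
from typing import Callable

# An "agent" here is just a function: (task, context) -> output. Purely illustrative.
Agent = Callable[[str, list[str]], str]


class Handoff(Enum):
    SUMMARIZED = "summarized"  # pass a condensed version of earlier outputs
    COMPLETE = "complete"      # pass every earlier output in full
    TASK_ONLY = "task_only"    # pass nothing but the original task


def summarize(history: list[str], max_chars: int = 40) -> str:
    """Stand-in summarizer that truncates each entry."""
    return " | ".join(entry[:max_chars] for entry in history)


def run_sequence(task: str, agents: list[Agent], handoff: Handoff) -> str:
    """Run agents in order, choosing what context each one receives."""
    history: list[str] = []
    output = ""
    for agent in agents:
        if handoff is Handoff.COMPLETE:
            context = list(history)
        elif handoff is Handoff.SUMMARIZED:
            context = [summarize(history)] if history else []
        else:
            context = []
        output = agent(task, context)
        history.append(output)
    return output


def researcher(task: str, context: list[str]) -> str:
    return f"notes({task})"


def writer(task: str, context: list[str]) -> str:
    return f"draft({task}; ctx={len(context)})"


full = run_sequence("launch plan", [researcher, writer], Handoff.COMPLETE)
bare = run_sequence("launch plan", [researcher, writer], Handoff.TASK_ONLY)
```

In this sketch the trade-off is visible in the size of `context`: complete transfer grows with every step, the summarized handoff stays at one condensed entry, and task-only passes nothing forward.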

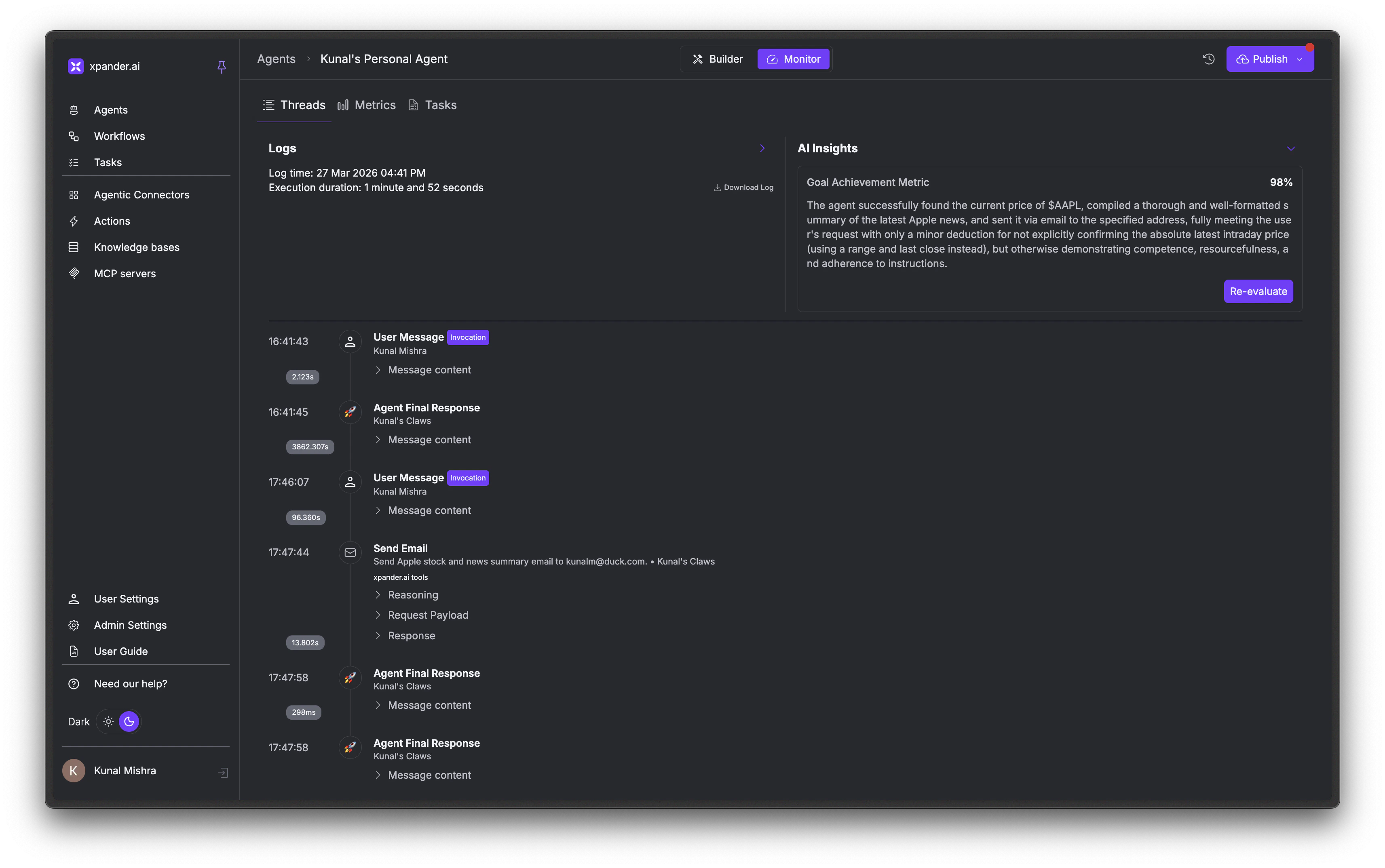

Threads

Every conversation with an agent gets a unique thread ID that maintains context across multiple messages. Threads record user messages, agent responses, tool calls with request/response payloads, token usage, and execution duration. You can use thread_id programmatically to continue conversations across API calls.
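The pattern of carrying a thread ID between calls looks roughly like the sketch below. It does not use the xpander SDK or API: `FakeAgent`, `Reply`, and the `send` method are stand-ins invented for this example, and the only point is that the caller keeps the returned ID and passes it back on the next call.

```python
import uuid
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Reply:
    thread_id: str
    text: str


@dataclass
class FakeAgent:
    """Stand-in for a remote agent. Not the xpander SDK or API."""
    threads: dict[str, list[str]] = field(default_factory=dict)

    def send(self, message: str, thread_id: Optional[str] = None) -> Reply:
        # No thread_id means a new conversation, so a new ID is issued.
        if thread_id is None:
            thread_id = str(uuid.uuid4())
        history = self.threads.setdefault(thread_id, [])
        history.append(message)
        return Reply(thread_id, f"seen {len(history)} message(s) in this thread")


agent = FakeAgent()
first = agent.send("My order number is 1234.")
# Reuse the returned thread_id so the follow-up lands in the same conversation.
second = agent.send("When will it ship?", thread_id=first.thread_id)
# Omitting thread_id starts an unrelated conversation with no prior context.
fresh = agent.send("When will it ship?")
```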

In the Monitor tab, the Threads view lets you inspect any conversation in detail. Click a thread to see the full reasoning chain: every user message, agent response, and tool call with expandable request/response payloads. Each thread also includes an AI Insights panel that scores goal achievement, helping you evaluate how well the agent handled the conversation.

Tasks

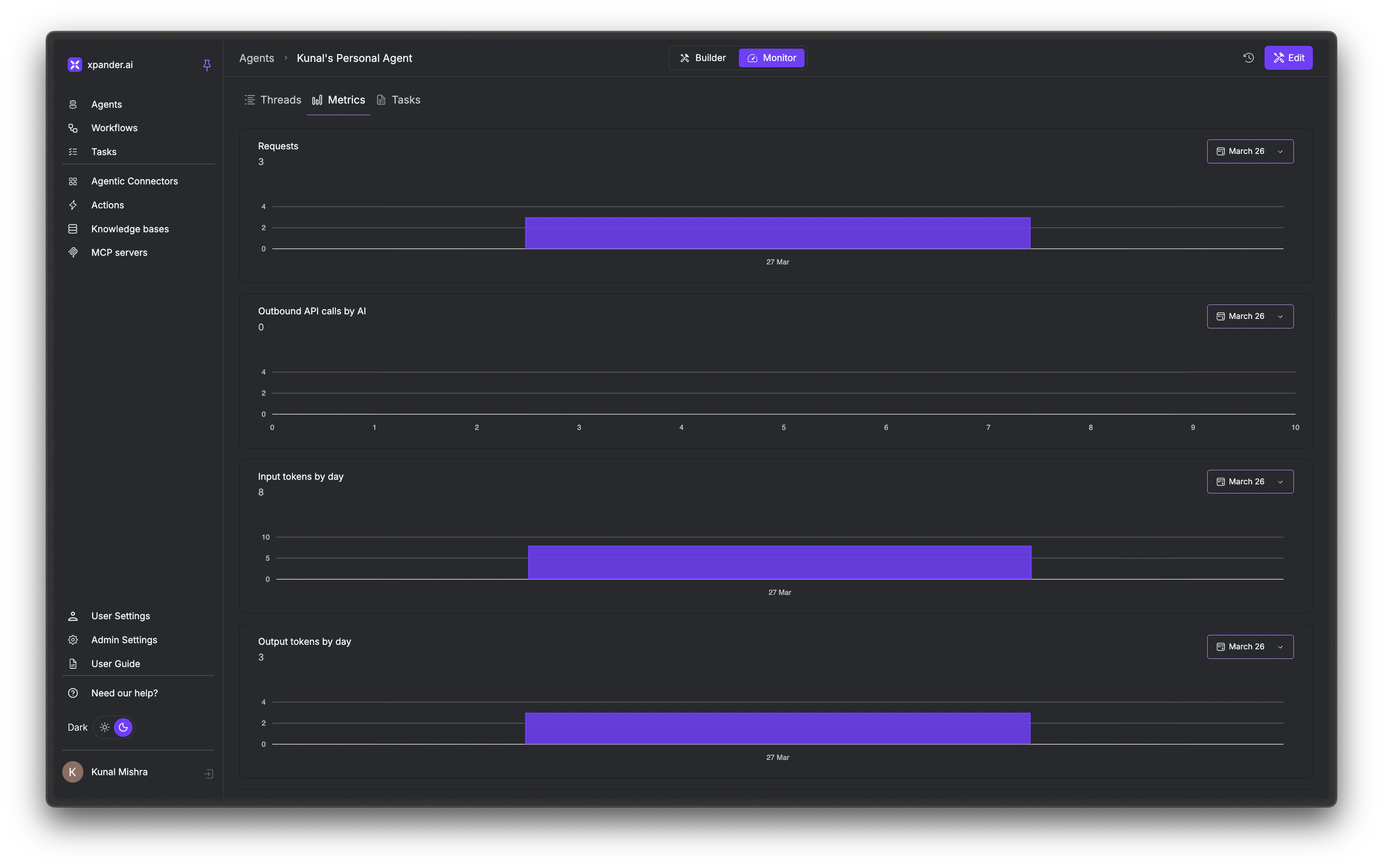

Every time an agent is invoked, xpander creates a task to track the execution. Tasks move through statuses: queued, running, completed, failed, or cancelled. Each task records the input, result, timing (created, started, finished), and source (API, SDK, webhook, Slack, Telegram). The Tasks view in Monitor shows all agent executions across your organization. You can filter by status or date range to track completion rates and debug failed executions.

Metrics

The Metrics view in Monitor tracks your agent’s usage over time with visual graphs:

- Requests: total agent invocations

- Outbound API calls by AI: external tool usage

- Input/Output/Total tokens by day: message volume and cost tracking
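If you export usage data and want comparable daily totals outside the UI, the roll-up is a simple group-by. The sketch below is an illustration under assumptions: the `Invocation` record and its field names are hypothetical rather than an xpander data structure, and the sample values are made up.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Invocation:
    """Hypothetical record of one agent run; not an xpander data structure."""
    finished_at: datetime
    input_tokens: int
    output_tokens: int
    outbound_calls: int  # external tool/API calls made by the agent


def daily_metrics(invocations: list[Invocation]) -> dict[str, dict[str, int]]:
    """Group invocations by calendar day and total the counters for each day."""
    days: dict[str, dict[str, int]] = defaultdict(lambda: defaultdict(int))
    for inv in invocations:
        day = days[inv.finished_at.date().isoformat()]
        day["requests"] += 1
        day["outbound_calls"] += inv.outbound_calls
        day["input_tokens"] += inv.input_tokens
        day["output_tokens"] += inv.output_tokens
        day["total_tokens"] += inv.input_tokens + inv.output_tokens
    # Convert nested defaultdicts to plain dicts for predictable comparison.
    return {date: dict(counters) for date, counters in days.items()}


runs = [
    Invocation(datetime(2025, 1, 1, 9, 0), 120, 80, 2),
    Invocation(datetime(2025, 1, 1, 17, 30), 200, 150, 0),
    Invocation(datetime(2025, 1, 2, 8, 15), 50, 25, 1),
]
report = daily_metrics(runs)
```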

Personal Agents

Personal Agents are fully managed AI assistants powered by the OpenClaw runtime. IT deploys once, and all employees get access. They’re available in Slack, Teams, and voice. Each employee can get their own agent with personal memory and conversation history, and agents can delegate to specialized agents for specific tasks.

Tools

Tools available to agents include think (a private scratchpad for reasoning), analyze (an evaluation checkpoint), and multi_tool_use.parallel (run multiple tools simultaneously).

Built-in Tools

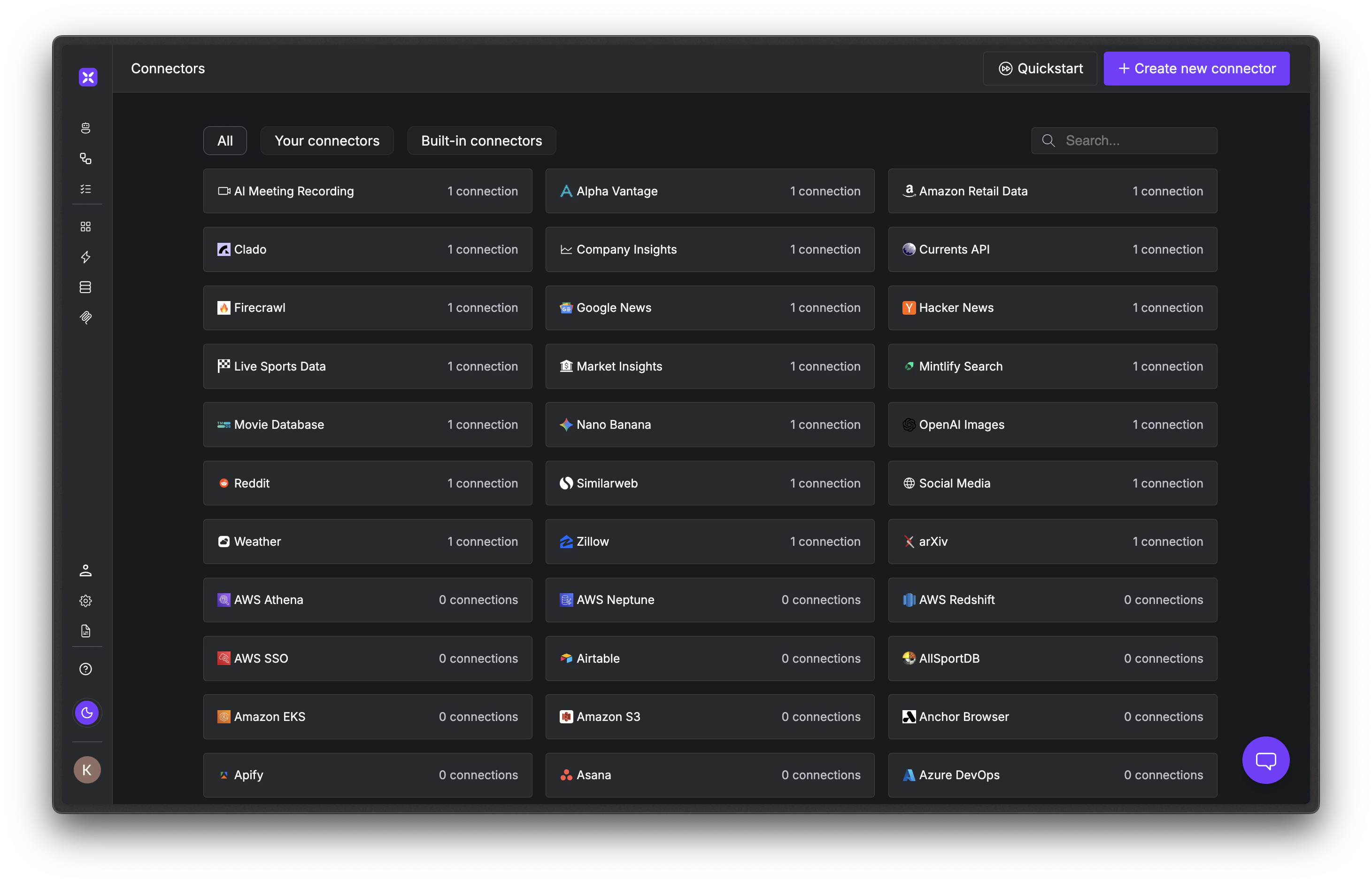

These are ready to use immediately with no setup: Send Email, Web Search, Generate Image, Markdown to PDF, Extract Text (OCR), Code Interpreter, Save CSV File, Text to Speech, File Upload, Create Screenshot from URL, and Sleep.

Connector Tools

Connector tools are integrations with external services that you install from the catalog. Available connectors include Slack, GitHub, Google Drive, Notion, Jira, Linear, Snowflake, BigQuery, MongoDB, and 100+ more.

Knowledge Bases

Knowledge Bases let you upload documents so agents can give context-aware responses. At query time, agents search a vector database, retrieve relevant chunks, and synthesize answers with citations. This pattern is known as RAG (Retrieval-Augmented Generation): grounding agent responses in your organization’s data.

Next Steps

- Build Your First Agent: Create an agent in 5 minutes

- Agent Configuration: Deep dive into agent settings

- Tools & Connectors: Browse the connector catalog

- Workflows: Build multi-step AI pipelines